Aug 2025 - Dec 2025

EchoWear

Swift-based iOS/watchOS prototype for voice-driven wearable interaction.

EchoWear is a Swift-based iOS/watchOS prototype that explores wearable-first voice monitoring. It includes a SwiftUI sign-in flow, a voice monitor screen, configurable speech recognition behavior, microphone listening through AVFoundation, and a project structure that prepares for Apple Watch features.


Voice-Driven Wearable Prototype

- SwiftUI Sign In
- Voice Monitor
- Speech Recognition
- Microphone Flow

Highlights

- SwiftUI App
- Speech Recognition
- Wearable-Focused UI
- iOS Simulator Ready


Problem Statement

Voice-driven mobile and wearable interactions need a simple interface, permission-aware microphone access, and clear feedback so users can experiment with hands-free workflows without complex touch navigation.

What I Built

- SwiftUI sign-in interface
- Voice monitor surface
- Speech recognition support
- Microphone listening flow
- Keychain-based credential storage
- iOS simulator support

How It Works

A conceptual workflow showing how the project moves from input to processing and output.

- Step 1: User Opens App
- Step 2: Authentication Flow
- Step 3: Microphone Permission
- Step 4: Speech Recognition
- Step 5: Voice Monitor Feedback
- Step 6: Future Wearable Actions
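The first five steps above can be sketched as a small state machine. This is a minimal, framework-free illustration that assumes the flow advances only on the events named here; `SessionStage`, `SessionEvent`, and `advance(_:with:)` are hypothetical names, not identifiers from the EchoWear codebase, and Step 6 is left out because it describes future work.

```swift
// Hypothetical model of the EchoWear session flow (Steps 1-5).
// Stage/event names and transition rules are illustrative assumptions.
enum SessionStage: Equatable {
    case launched                  // Step 1: user opens the app
    case signedIn                  // Step 2: authentication succeeded
    case microphoneAuthorized      // Step 3: mic + speech permissions granted
    case listening                 // Step 4: recognition running
    case showingTranscript(String) // Step 5: monitor shows recognized text
    case permissionDenied          // user declined a permission prompt
}

enum SessionEvent {
    case authenticationSucceeded
    case permissionsGranted
    case permissionsDenied
    case startListening
    case recognized(String)
    case stopListening
}

/// Returns the next stage, or the unchanged stage if the event does not apply.
func advance(_ stage: SessionStage, with event: SessionEvent) -> SessionStage {
    switch (stage, event) {
    case (.launched, .authenticationSucceeded):
        return .signedIn
    case (.signedIn, .permissionsGranted):
        return .microphoneAuthorized
    case (.signedIn, .permissionsDenied):
        return .permissionDenied
    case (.microphoneAuthorized, .startListening):
        return .listening
    case (.listening, .recognized(let text)),
         (.showingTranscript, .recognized(let text)):
        return .showingTranscript(text)
    case (.listening, .stopListening),
         (.showingTranscript, .stopListening):
        return .microphoneAuthorized
    default:
        return stage  // ignore out-of-order events, e.g. listening before sign-in
    }
}

// Usage: walk the happy path from launch to a recognized phrase.
var stage = SessionStage.launched
let events: [SessionEvent] = [
    .authenticationSucceeded, .permissionsGranted, .startListening, .recognized("start timer"),
]
for event in events {
    stage = advance(stage, with: event)
}
print(stage)
```

Keeping transitions in one pure function makes the "clean UI state model" easy to test: a SwiftUI view only needs to render whichever stage it is given.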

Architecture / System Design

A simplified system view of the major project components and how responsibilities connect.

- SwiftUI App
- Authentication Manager
- Speech Recognizer
- AVFoundation Audio Input
- Keychain Storage
- watchOS Project Structure
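One way to connect these components is to put protocol boundaries between the SwiftUI layer and the framework-backed pieces. The sketch below assumes that design; `Authenticating`, `SpeechTranscribing`, `VoiceMonitorCoordinator`, `DemoAuth`, and `ScriptedSpeech` are hypothetical names, and on device the speech protocol would be backed by the Speech framework fed from AVFoundation audio input rather than by the scripted stand-in shown here.

```swift
// Hypothetical protocol boundaries for the components listed above.
protocol Authenticating {
    func signIn(email: String, password: String) -> Bool
}

protocol SpeechTranscribing {
    /// Delivers transcript text to the handler as it is recognized.
    func startTranscribing(_ onText: @escaping (String) -> Void)
    func stop()
}

/// Coordinates authentication and speech; a SwiftUI view would read its state.
final class VoiceMonitorCoordinator {
    private let auth: any Authenticating
    private let speech: any SpeechTranscribing
    private(set) var isSignedIn = false
    private(set) var transcript = ""

    init(auth: any Authenticating, speech: any SpeechTranscribing) {
        self.auth = auth
        self.speech = speech
    }

    func signIn(email: String, password: String) {
        isSignedIn = auth.signIn(email: email, password: password)
    }

    func startListening() {
        guard isSignedIn else { return }  // listening requires a signed-in user
        speech.startTranscribing { [weak self] text in
            self?.transcript = text
        }
    }

    func stopListening() {
        speech.stop()
    }
}

// Stand-ins for the real framework-backed components.
struct DemoAuth: Authenticating {
    func signIn(email: String, password: String) -> Bool {
        !email.isEmpty && !password.isEmpty
    }
}

final class ScriptedSpeech: SpeechTranscribing {
    let phrases: [String]
    init(phrases: [String]) { self.phrases = phrases }
    func startTranscribing(_ onText: @escaping (String) -> Void) {
        phrases.forEach(onText)  // replay canned phrases synchronously
    }
    func stop() {}
}

// Usage
let coordinator = VoiceMonitorCoordinator(
    auth: DemoAuth(),
    speech: ScriptedSpeech(phrases: ["start", "start workout"])
)
coordinator.signIn(email: "demo@example.com", password: "demo")
coordinator.startListening()
print(coordinator.transcript)
```

Because the coordinator depends only on protocols, its sign-in and listening logic can run in a unit test without live audio. In the app it would also need to publish changes to SwiftUI (for example through `ObservableObject`), which is omitted here.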

Technical Implementation

Mobile UI

- SwiftUI screens

- Modern sign-in flow

- Wearable-focused visual style

Speech + Audio

- Apple Speech framework

- Microphone listening flow

- AVFoundation audio input
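When Apple's Speech framework reports partial results, each `SFSpeechRecognitionResult` carries the whole transcript recognized so far (`bestTranscription.formattedString`) plus an `isFinal` flag, so a voice monitor should replace its text on each update rather than append to it. The sketch below models that behavior without importing Speech; `RecognitionUpdate` and `VoiceMonitorState` are hypothetical types, and clearing the live text after a final result is an assumed design choice.

```swift
// Framework-free model of consuming speech recognition results.
// Assumes updates arrive like SFSpeechRecognizer partial results:
// cumulative text for the current utterance, plus a final flag.
struct RecognitionUpdate {
    let text: String   // stands in for result.bestTranscription.formattedString
    let isFinal: Bool  // stands in for result.isFinal
}

struct VoiceMonitorState {
    private(set) var liveText = ""                // what the monitor shows now
    private(set) var finishedUtterances: [String] = []

    mutating func apply(_ update: RecognitionUpdate) {
        // Partial results are cumulative, so replace instead of appending.
        liveText = update.text
        if update.isFinal {
            finishedUtterances.append(update.text)
            liveText = ""  // assumed choice: reset the live line after a final result
        }
    }
}

// Usage
var monitor = VoiceMonitorState()
let updates = [
    RecognitionUpdate(text: "start", isFinal: false),
    RecognitionUpdate(text: "start timer", isFinal: false),
    RecognitionUpdate(text: "start timer", isFinal: true),
]
for update in updates {
    monitor.apply(update)
}
print(monitor.finishedUtterances) // ["start timer"]
```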

Authentication

- Demo sign-in options: Apple and email/password

- Keychain credential storage

- CryptoKit password hashing
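A minimal sketch of how the demo email/password path could keep only a password hash at rest, assuming a storage protocol that the device build backs with Keychain Services. `CredentialStoring`, `InMemoryCredentialStore`, `PasswordHasher`, and `DemoAccountManager` are hypothetical names. The hasher is injected so the example runs without CryptoKit, and the toy hasher in the usage lines is a placeholder, not a real hash.

```swift
import Foundation

// Hypothetical storage boundary. On device this would wrap Keychain Services
// (SecItemAdd / SecItemCopyMatching with kSecClassGenericPassword);
// the in-memory version lets the sign-in logic run anywhere.
protocol CredentialStoring {
    func save(_ value: Data, for account: String)
    func load(for account: String) -> Data?
}

final class InMemoryCredentialStore: CredentialStoring {
    private var items: [String: Data] = [:]
    func save(_ value: Data, for account: String) { items[account] = value }
    func load(for account: String) -> Data? { items[account] }
}

/// Maps a password to the bytes that get stored. With CryptoKit this could be
/// `{ Data(SHA256.hash(data: Data($0.utf8))) }`.
typealias PasswordHasher = (String) -> Data

struct DemoAccountManager {
    let store: any CredentialStoring
    let hashPassword: PasswordHasher

    func register(email: String, password: String) {
        // Only the hash is stored, never the plain-text password.
        // Lowercasing the email is an assumed normalization choice.
        store.save(hashPassword(password), for: email.lowercased())
    }

    func verify(email: String, password: String) -> Bool {
        guard let stored = store.load(for: email.lowercased()) else { return false }
        return stored == hashPassword(password)
    }
}

// Usage (toyHasher only reverses bytes; it is NOT a real hash)
let toyHasher: PasswordHasher = { Data(Array($0.utf8.reversed())) }
let accounts = DemoAccountManager(store: InMemoryCredentialStore(), hashPassword: toyHasher)
accounts.register(email: "Demo@Example.com", password: "secret")
print(accounts.verify(email: "demo@example.com", password: "secret")) // true
print(accounts.verify(email: "demo@example.com", password: "wrong"))  // false
```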

Platform

- Xcode project

- iOS simulator support

- watchOS-related structure

Screenshots & Visuals

Actual app screenshots appear first, followed by a workflow diagram based on the project structure.

SwiftUI Sign-In Screen

Actual EchoWear iOS screenshot from the project README showing the clean authentication entry point.

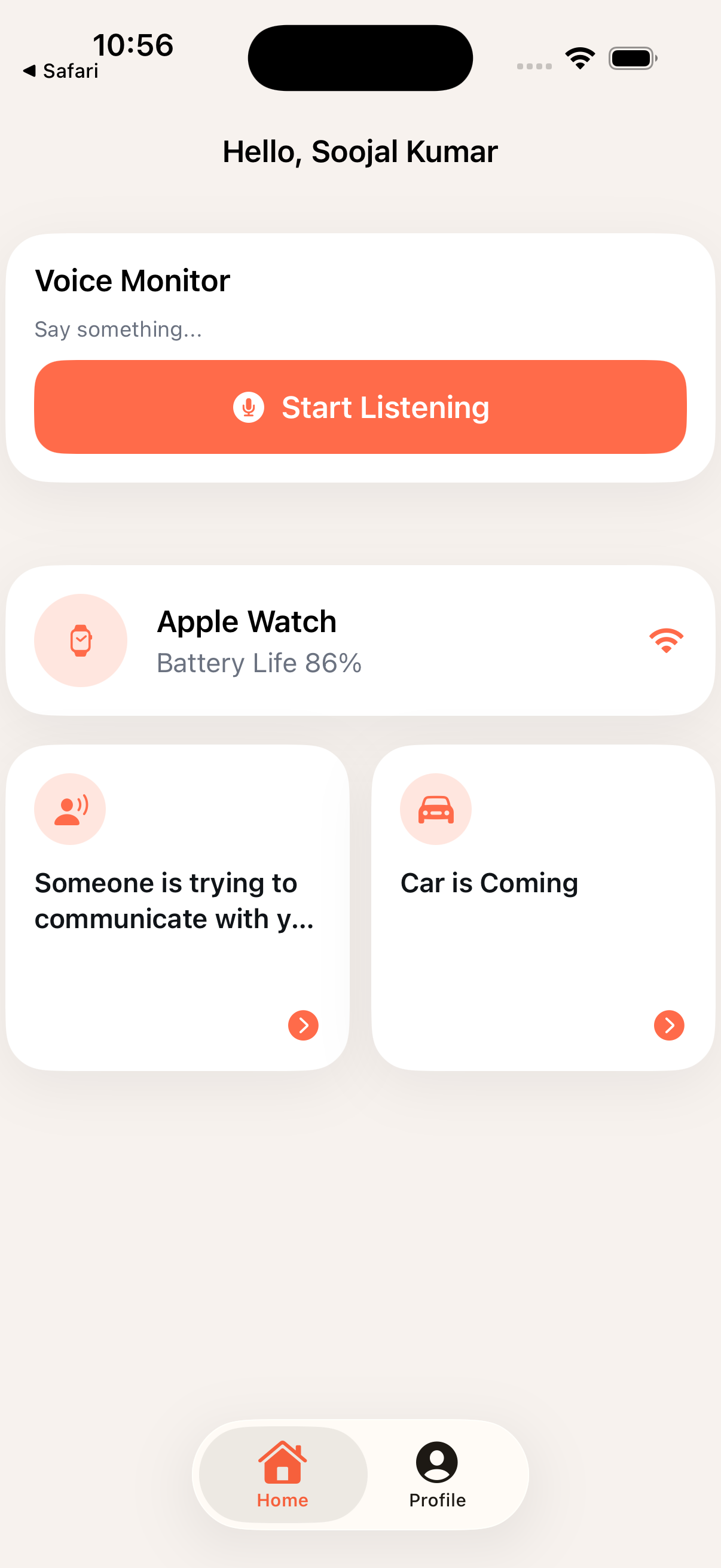

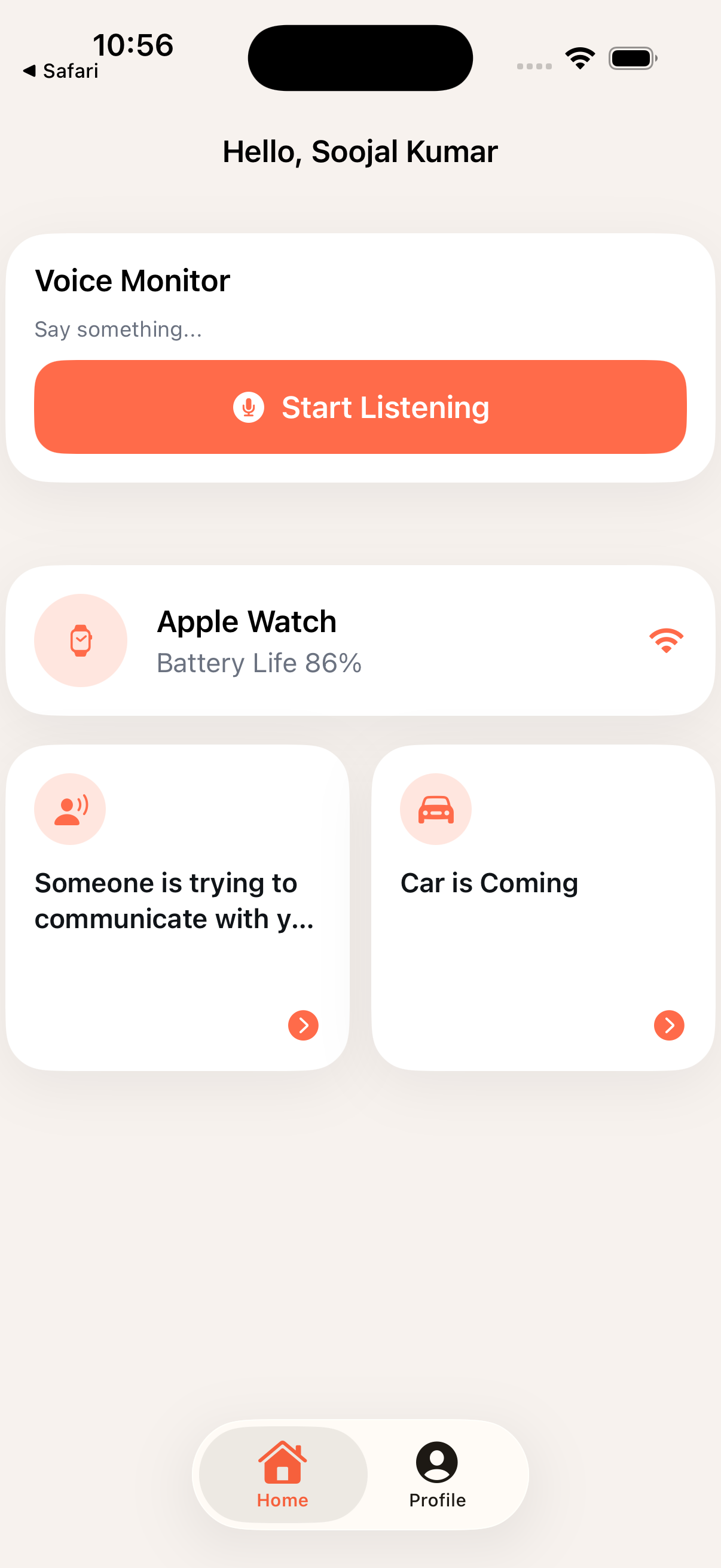

EchoWear Home Surface

Actual app screenshot showing the main EchoWear interface for the wearable-first voice monitoring prototype.

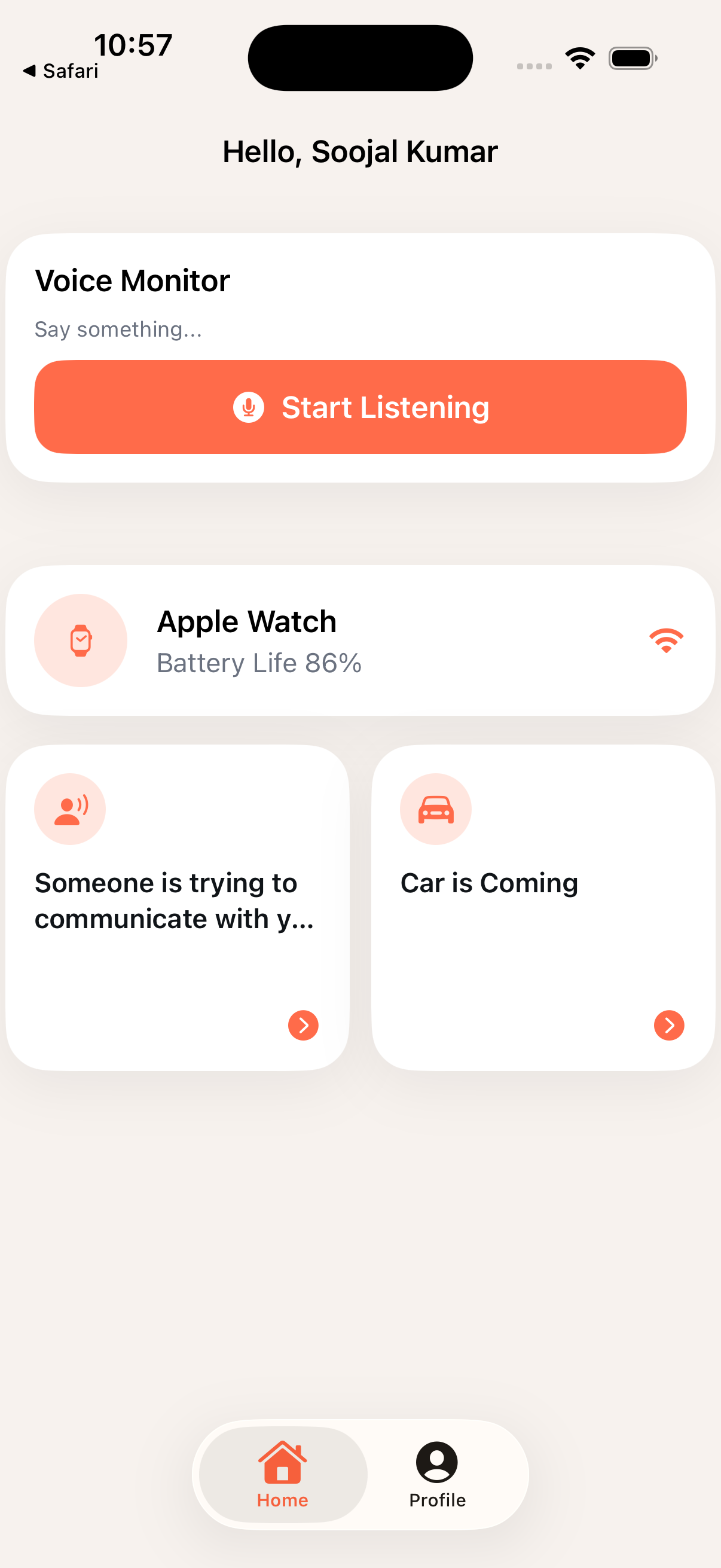

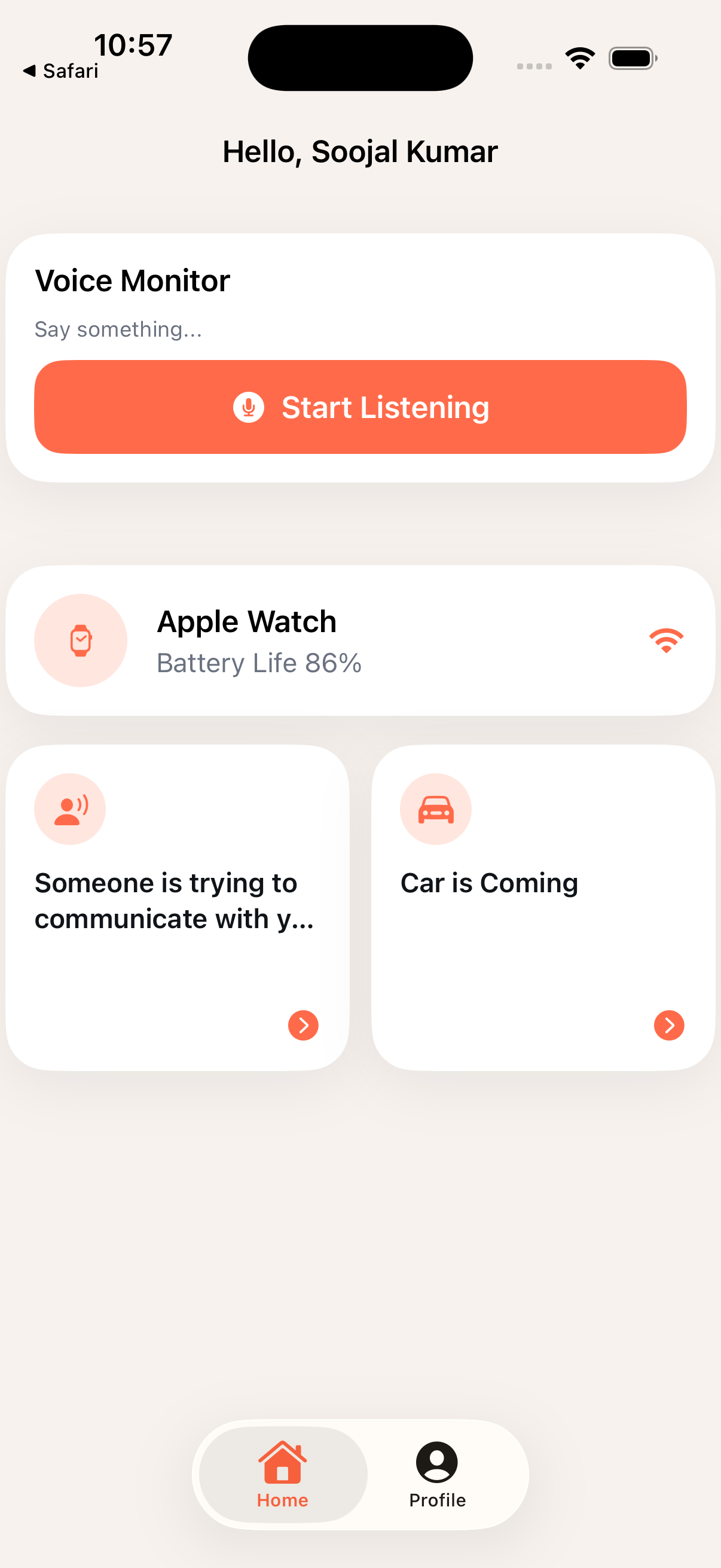

Speech Recognition Screen

Actual app screenshot of the speech recognition surface that connects microphone input with voice-driven interaction.

Voice Interaction Flow

Workflow diagram showing how voice input moves from AVFoundation audio capture through Apple's Speech framework to UI feedback.

Voice Flow Preview

- Open EchoWear
- Authorize microphone + speech recognition
- Start listening
- Recognized speech updates the voice monitor interface

Challenges & Solutions

Challenge

Hands-free interactions require microphone access, speech permissions, and a clean UI state model.

Solution

Structured the prototype around permission-aware audio input, speech recognition, and a clear voice monitor surface.
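Permission-aware gating can be reduced to a pure function of the two authorization states. The enums below mirror the cases of `AVAudioSession.RecordPermission` and `SFSpeechRecognizerAuthorizationStatus` so the logic runs without Apple frameworks; `MicPermission`, `SpeechPermission`, `ListenAvailability`, and `listenAvailability(mic:speech:)` are hypothetical, and the messages are illustrative.

```swift
// Local mirrors of the platform permission states (assumed 1:1 with Apple's cases).
enum MicPermission { case undetermined, denied, granted }
enum SpeechPermission { case notDetermined, denied, restricted, authorized }

enum ListenAvailability: Equatable {
    case ready
    case needsPrompt      // at least one permission has not been requested yet
    case blocked(String)  // user-facing explanation
}

/// Decides what the listen control should do given both permissions.
func listenAvailability(mic: MicPermission, speech: SpeechPermission) -> ListenAvailability {
    switch (mic, speech) {
    case (.granted, .authorized):
        return .ready
    case (.denied, _):
        return .blocked("Microphone access is off. Enable it in Settings to use voice monitoring.")
    case (_, .denied):
        return .blocked("Speech recognition is off. Enable it in Settings.")
    case (_, .restricted):
        return .blocked("Speech recognition is restricted on this device.")
    case (.undetermined, _), (_, .notDetermined):
        return .needsPrompt
    }
}

// Usage
print(listenAvailability(mic: .granted, speech: .notDetermined))
```

Checking denials before "not determined" means the UI never re-prompts for a permission the user has already refused; it explains the block instead.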

Challenge

A wearable concept needs to stay simple while leaving room for deeper watchOS behavior.

Solution

Built the iOS prototype with watchOS-related project structure and modular Swift files for continued expansion.
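Shared Swift files can serve both the iOS and watchOS targets, with small platform differences isolated behind conditional compilation. The sketch assumes a hypothetical `VoiceMonitorLayout` type with illustrative values; only the `#if os(watchOS)` mechanism itself is standard Swift.

```swift
// Hypothetical shared configuration compiled into both targets.
struct VoiceMonitorLayout {
    let transcriptLineLimit: Int
    let showsWaveform: Bool

    static var current: VoiceMonitorLayout {
        #if os(watchOS)
        // Smaller screen: fewer transcript lines, no waveform (assumed values).
        return VoiceMonitorLayout(transcriptLineLimit: 2, showsWaveform: false)
        #else
        return VoiceMonitorLayout(transcriptLineLimit: 6, showsWaveform: true)
        #endif
    }
}

// Usage
print(VoiceMonitorLayout.current.transcriptLineLimit) // 6 outside watchOS
```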

Results / Impact

Demonstrates mobile and wearable prototyping with native Apple frameworks.

Shows ability to combine UI, authentication, permissions, speech recognition, and audio workflows in a practical app structure.